The Maths Behind the Machine Without the Notation

My notes from working through the mathematical intuition behind how ML models learn. I skipped formal notation deliberately - the ideas are accessible without it, and the symbols tend to obscure rather than clarify at this stage. The lab builds linear regression and gradient descent from scratch in Python with a matplotlib visualisation of the learning process.

Where I Started

When I first tried to understand how ML models learn, most explanations split into two useless extremes: either "the model trains on data and gets better" with no further detail, or a wall of calculus notation that assumes you already know what a partial derivative is.

Neither helped. What I actually needed was the mechanism - the specific thing the model does, step by step, to improve. It turns out the idea is simple. The notation around it is what's intimidating, not the concept itself.

The version I'll use to explain it is linear regression with gradient descent. It's the smallest possible example where the full learning loop is visible: the model makes a prediction, measures how wrong it is, and adjusts itself. Everything in more complex models is a scaled-up version of that same loop.

Linear Regression - What It Actually Is

Linear regression is a model that predicts a number by finding the straight line that best fits a set of data points.

The example I used to think through it: a dataset of flat sizes in square metres and their sale prices. Plot them - size on the horizontal axis, price on the vertical. Linear regression finds the straight line through that scatter of points that minimises the total gap between the line and the actual values.

Once you have the line, prediction is just reading off the graph. Give it a flat size it's never seen before, and it tells you where that size sits on the line.

The line itself is defined by two numbers. The slope controls how steeply it rises - in this example, roughly how much each additional square metre adds to the price. The intercept is where the line sits when size is zero - often a number without a clean real-world meaning, but it sets the vertical position of the line correctly.

Those two numbers - slope and intercept - are what the model is trying to find. "Training" the model means searching for the values of slope and intercept that make the line fit the data as well as possible.
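To make that concrete, here is a minimal sketch of the whole "model" as code: two numbers and one line of arithmetic. The parameter values are made-up placeholders for illustration, not fitted to any data, and the function name `predict` is my own.

```python
def predict(size, slope, intercept):
    """Read a price off the line for a given flat size."""
    return slope * size + intercept

# Hypothetical parameter values, chosen only for illustration.
slope = 4.0       # each extra square metre adds 4 (thousand) to the price
intercept = 20.0  # where the line sits when size is zero

for size in (30, 75, 110):
    print(f"{size} sqm -> {predict(size, slope, intercept)}k")
```

Training never changes this function. It only changes the two numbers passed into it.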

What Loss Means

To search for better values, the model needs a way to measure how wrong its current values are. This is called the loss - a single number summarising the total error across all data points.

The calculation is straightforward: for each data point, take the difference between what the line predicts and what the actual value is. Square that difference (this handles the sign problem - without squaring, positive and negative errors cancel each other out and the total looks artificially small). Add them all up. That's the loss. The lab divides that total by the number of data points to get an average (the mean squared error), which changes the scale of the number but not which line wins.

Lower loss means the line fits the data better. The goal is to find the slope and intercept that push the loss as close to zero as possible.
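A small sketch of why the squaring matters, using three made-up points and a deliberately poor line. The data and function names are illustrative assumptions, not part of the lab.

```python
def raw_error_sum(sizes, prices, slope, intercept):
    """Sum of (predicted - actual) with no squaring."""
    return sum((slope * s + intercept) - p for s, p in zip(sizes, prices))

def squared_error_sum(sizes, prices, slope, intercept):
    """Sum of (predicted - actual) squared."""
    return sum(((slope * s + intercept) - p) ** 2 for s, p in zip(sizes, prices))

# Three made-up points, for illustration only.
sizes = [40, 60, 80]
prices = [150, 200, 250]

# A flat line at 200: too high for the first point, too low for the last.
print("flat line, raw sum:    ", raw_error_sum(sizes, prices, 0.0, 200.0))
print("flat line, squared sum:", squared_error_sum(sizes, prices, 0.0, 200.0))

# A line that passes through all three points.
print("good line, squared sum:", squared_error_sum(sizes, prices, 2.5, 50.0))
```

The flat line's raw errors cancel out, so it looks perfect by that measure. The squared sum exposes it, and drops to zero only for the line that actually fits.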

This is not a machine learning concept specifically. It's just measuring how wrong you are with a formula that doesn't let positive and negative errors cancel. The ML-specific part is what happens next - how the model uses that number to improve.

Gradient Descent - How the Model Improves

Gradient descent is the process of using the loss to update the model's parameters.

The analogy I keep coming back to: you're standing somewhere in a hilly landscape in thick fog. You can't see the terrain ahead, but you can feel the slope under your feet. If you take a small step in the direction that feels downhill, you'll be slightly lower. Do that repeatedly and you'll eventually reach a valley - a point where every direction feels uphill, meaning you can't improve further.

In the model, the landscape is the loss plotted against all possible combinations of slope and intercept. The valley is where the loss is minimised. Gradient descent navigates toward it by asking, at each step: given the current slope and intercept, which small adjustment reduces the loss?

The answer to that question is the gradient - a measure of which direction is uphill at the current position. The model moves in the opposite direction. That's the update. Repeat it enough times and the model converges on the slope and intercept that fit the data best.
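One way to see "feeling the slope under your feet" without any calculus is to nudge each parameter by a tiny amount and watch what the loss does. The sketch below does exactly that. It is an illustration of the idea rather than how the lab computes gradients (the lab uses the exact formula), and the data, the names `feel_slope` and `nudge`, and the step size are all my own assumptions.

```python
def loss(slope, intercept, sizes, prices):
    """Mean squared error of the line on the data."""
    n = len(sizes)
    return sum((slope * s + intercept - p) ** 2 for s, p in zip(sizes, prices)) / n

def feel_slope(slope, intercept, sizes, prices, nudge=1e-6):
    """Estimate how much the loss changes per unit change in each parameter."""
    base = loss(slope, intercept, sizes, prices)
    d_slope = (loss(slope + nudge, intercept, sizes, prices) - base) / nudge
    d_intercept = (loss(slope, intercept + nudge, sizes, prices) - base) / nudge
    return d_slope, d_intercept

# Three made-up points, for illustration only.
sizes = [40, 60, 80]
prices = [150, 200, 250]

d_slope, d_intercept = feel_slope(0.0, 0.0, sizes, prices)
print("uphill direction:", d_slope, d_intercept)

# Both estimates are negative: increasing either parameter lowers the loss.
# So step the opposite way to the gradient, i.e. increase both.
step = 0.0001
new_slope = 0.0 - step * d_slope
new_intercept = 0.0 - step * d_intercept

print("loss before:", loss(0.0, 0.0, sizes, prices))
print("loss after: ", loss(new_slope, new_intercept, sizes, prices))
```

A single step does not reach the valley, but the loss after it is lower than before, which is all gradient descent asks of each step.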

The Learning Rate

Each step has a size, set by a value called the learning rate. I spent more time understanding this than anything else in this section, because it's deceptively important.

Set the learning rate too large and each step overshoots the valley. Instead of settling at the bottom, the model bounces back and forth across it - or misses entirely and climbs the other side. The loss goes up instead of down. Set it too small and the steps are so tiny that the model takes an impractical number of iterations to converge, and may plateau well before finding the minimum.

There's no formula for the right value - it depends on the data and the model. In practice it's chosen by experimentation, which is one of the reasons training ML models involves more craft than the "just run it" framing implies. The learning rate experiment at the end of the lab makes this concrete.
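A minimal sketch of what such an experiment could look like, using the same flat dataset as the lab. The three rates are my own picks for illustration: one too small, the lab's default, and one too large. The helper names `mse` and `run` are assumptions, not the lab's functions.

```python
sizes = [30, 45, 50, 60, 65, 70, 80, 90, 95, 110]
prices = [150, 200, 210, 250, 270, 300, 340, 380, 400, 450]

def mse(slope, intercept):
    """Mean squared error of the line on the dataset above."""
    n = len(sizes)
    return sum((slope * s + intercept - p) ** 2 for s, p in zip(sizes, prices)) / n

def run(learning_rate, iterations=50):
    """Plain gradient descent from slope=0, intercept=0; returns the final loss."""
    slope, intercept = 0.0, 0.0
    n = len(sizes)
    for _ in range(iterations):
        errors = [slope * s + intercept - p for s, p in zip(sizes, prices)]
        slope_grad = (2 / n) * sum(e * s for e, s in zip(errors, sizes))
        intercept_grad = (2 / n) * sum(errors)
        slope -= learning_rate * slope_grad
        intercept -= learning_rate * intercept_grad
    return mse(slope, intercept)

start = mse(0.0, 0.0)
print(f"starting loss: {start:.3g}")
for lr in (0.000001, 0.0001, 0.0003):
    print(f"learning rate {lr:<8} loss after 50 steps: {run(lr):.3g}")
```

On this data the smallest rate is still a long way from the valley after 50 steps, the middle one gets down quickly, and the largest one overshoots further on every step, ending with a loss far above where it started.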

Why This Generalises

Linear regression has two parameters. A large language model has billions, often tens or hundreds of billions. The learning mechanism is the same.

When people say a model was "trained on 100 billion tokens" they mean gradient descent (or a close variant of it) ran on that much data - the model made predictions, measured loss, and updated its parameters, over and over, at massive scale. When people mention "learning rate schedules" they mean the learning rate was adjusted during training - typically starting small, increasing, then decreasing again - to manage the stability problems that come with large models and large datasets.
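As a rough sketch of that "start small, increase, then decrease" shape: the function below ramps the learning rate up linearly and then lets it fall along a cosine curve. Warm-up followed by cosine decay is one common shape, not the only one, and every name and number here is an illustrative assumption rather than any particular model's settings.

```python
import math

def scheduled_learning_rate(step, total_steps, peak_rate, warmup_steps):
    """Linear warm-up to peak_rate, then cosine decay towards zero."""
    if step < warmup_steps:
        # Ramp up: a fraction of the peak that grows with each step.
        return peak_rate * (step + 1) / warmup_steps
    # Decay: progress runs from 0 (end of warm-up) towards 1 (end of training).
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    return peak_rate * 0.5 * (1 + math.cos(math.pi * progress))

# Hypothetical settings, for illustration only.
for step in (0, 5, 9, 10, 50, 99):
    rate = scheduled_learning_rate(step, total_steps=100, peak_rate=0.001, warmup_steps=10)
    print(f"step {step:>3}: learning rate {rate:.6f}")
```

In a training loop, this would replace the fixed learning rate: each step asks the schedule for the current value before applying the update.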

Once the core loop is clear, most of the vocabulary around model training stops being opaque. The engineering complexity is real, but it's wrapped around an idea that's genuinely straightforward.

The Security Angle - What Gradient Descent Makes Possible for Attackers

Gradient descent learns from data. That's exactly where it can be manipulated.

If an attacker can place crafted examples into the training data - even a small fraction of the total - they can steer what the model learns. This is a data poisoning attack. The model trains normally on what looks like a legitimate dataset. The poisoned examples shift the loss landscape in a direction the attacker chose. The model adjusts accordingly, and the resulting behaviour reflects the attacker's intent without any visible sign of compromise during training.

Demonstrated attacks include image classifiers that misidentify specific targets, spam filters that consistently pass certain senders, and recommendation systems with hidden biases. The training process itself gives no warning - the loss decreases normally, the model appears to converge, and the problem only surfaces at inference time when the right trigger is present.
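A toy sketch of the mechanism on the flat-price data from the lab. To keep it short it fits the line with the closed-form least-squares formula instead of running gradient descent, which stands in for a fully converged training run. The two poisoned listings are hypothetical, and far cruder than a real attack would be; the point is only to show a small number of planted examples moving what the model learns.

```python
def fit_line(sizes, prices):
    """Closed-form least-squares fit; returns (slope, intercept)."""
    n = len(sizes)
    mean_x = sum(sizes) / n
    mean_y = sum(prices) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(sizes, prices))
    sxx = sum((x - mean_x) ** 2 for x in sizes)
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

clean_sizes = [30, 45, 50, 60, 65, 70, 80, 90, 95, 110]
clean_prices = [150, 200, 210, 250, 270, 300, 340, 380, 400, 450]

# Hypothetical poison: two fake listings for large flats at very low prices.
poison_sizes = [100, 105]
poison_prices = [100, 100]

clean_slope, clean_intercept = fit_line(clean_sizes, clean_prices)
bad_slope, bad_intercept = fit_line(clean_sizes + poison_sizes,
                                    clean_prices + poison_prices)

target = 100  # the flat size the attacker cares about
print(f"clean model:    {clean_slope * target + clean_intercept:.0f}k for {target} sqm")
print(f"poisoned model: {bad_slope * target + bad_intercept:.0f}k for {target} sqm")
```

Nothing about the fitting code changed between the two runs. Only the data did, and the fitting procedure did exactly what it was designed to do with it.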

The implication I find most worth holding onto: the trustworthiness of a trained model is directly tied to the trustworthiness of its training data. "We trained on a large dataset" says nothing about whether that dataset was clean. Data provenance matters, and it's often the last thing documented.

Lab: Linear Regression and Gradient Descent from Scratch

Everything here is implemented without scikit-learn or numpy for the maths - just Python and matplotlib. The goal is to make every calculation visible rather than delegating to a library.

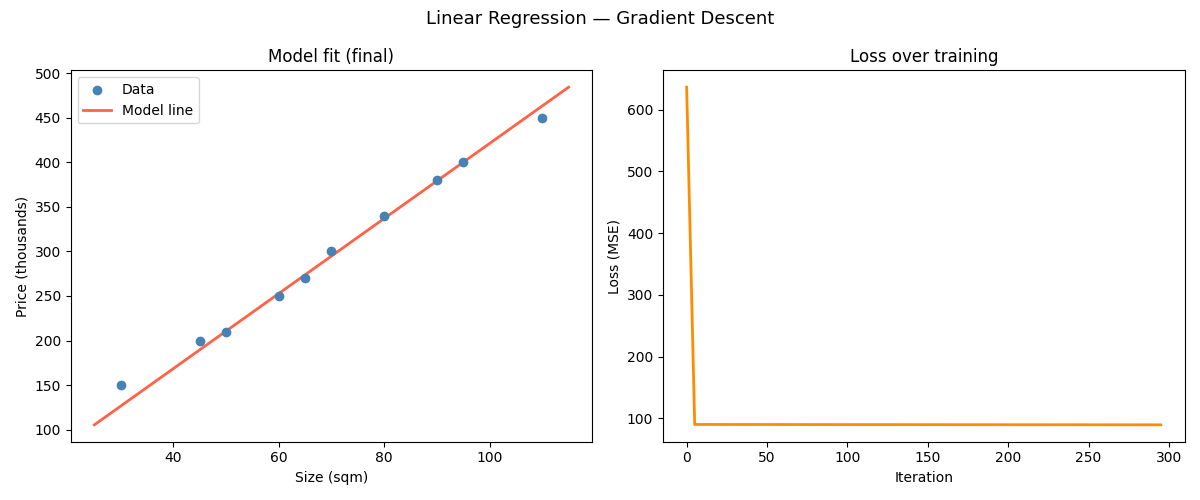

The output is a two-panel figure, saved as regression.png: the data with the fitted regression line on the left, and the loss curve falling on the right. Seeing both together is the clearest way I found to understand what convergence actually looks like.

Setup

    # Install matplotlib
    pip3 install matplotlib --break-system-packages

    # Create script file
    touch regression.py

Open the file:

    nano regression.py

Step 1 - The Dataset

    import matplotlib.pyplot as plt
    import matplotlib.animation as animation

    # Synthetic dataset: flat size (sqm) vs sale price (thousands).
    # Made up numbers, but the pattern is plausible.
    # In a real problem this would come from a file.
    sizes = [30, 45, 50, 60, 65, 70, 80, 90, 95, 110]
    prices = [150, 200, 210, 250, 270, 300, 340, 380, 400, 450]

    # Training history - stored so the animation can replay it afterwards.
    history = []

Step 2 - The Loss Function

    def compute_loss(sizes, prices, slope, intercept):
        """
        Mean squared error - average of (predicted - actual)^2.
        Dividing by n keeps the loss scale consistent with the averaged
        gradients in gradient_step below. Lower is better.
        """
        n = len(sizes)
        total_error = 0
        for size, price in zip(sizes, prices):
            predicted = slope * size + intercept
            error = predicted - price
            total_error += error ** 2
        return total_error / n

Step 3 - One Gradient Descent Step

This is the update. For each parameter, compute how sensitive the loss is to a small change in that parameter, then move it in the direction that reduces the loss.

    def gradient_step(sizes, prices, slope, intercept, learning_rate):
        """
        One gradient descent update for slope and intercept.
        For each parameter, the gradient tells us:
        if we nudge this slightly, does loss go up or down, and by how much?
        We move each parameter against its gradient - downhill.
        """
        n = len(sizes)
        slope_gradient = 0
        intercept_gradient = 0
        for size, price in zip(sizes, prices):
            predicted = slope * size + intercept
            error = predicted - price
            # Sensitivity of loss to slope at this data point
            slope_gradient += (2 / n) * error * size
            # Sensitivity of loss to intercept at this data point
            intercept_gradient += (2 / n) * error
        # Move each parameter downhill by one step
        new_slope = slope - learning_rate * slope_gradient
        new_intercept = intercept - learning_rate * intercept_gradient
        return new_slope, new_intercept

Step 4 - Training Loop

    def train(sizes, prices, learning_rate=0.0001, iterations=300):
        """
        Run gradient descent for a fixed number of iterations.
        Start from slope=0, intercept=0 - a flat line at zero.
        Record snapshots for the animation.
        """
        slope = 0.0
        intercept = 0.0
        for i in range(iterations):
            slope, intercept = gradient_step(
                sizes, prices, slope, intercept, learning_rate
            )
            loss = compute_loss(sizes, prices, slope, intercept)
            # Record every 5th step for a smooth animation
            if i % 5 == 0:
                history.append((slope, intercept, loss, i))
            # Print progress every 50 iterations
            if i % 50 == 0:
                print(f" Iteration {i:>4} | loss: {loss:>10.2f} | "
                      f"slope: {slope:.4f} | intercept: {intercept:.4f}")
        return slope, intercept

Step 5 - Visualisation

    def animate_training(sizes, prices, history, slope, intercept):
        """
        Draw the final fit (left) and the recorded loss curve (right),
        then save both panels to regression.png.
        As written this saves a static figure; the snapshots in history
        hold what a frame-by-frame animation would need.
        """
        fig, (ax_fit, ax_loss) = plt.subplots(1, 2, figsize=(12, 5))
        fig.suptitle("Linear Regression - Gradient Descent", fontsize=13)

        # Left panel: data points and the fitted line
        ax_fit.scatter(sizes, prices, color="steelblue", zorder=5, label="Data")
        x_line = [min(sizes) - 5, max(sizes) + 5]
        y_line = [slope * x + intercept for x in x_line]
        ax_fit.plot(x_line, y_line, color="tomato", linewidth=2, label="Model line")
        ax_fit.set_xlabel("Size (sqm)")
        ax_fit.set_ylabel("Price (thousands)")
        ax_fit.set_title("Model fit (final)")
        ax_fit.legend()

        # Right panel: loss at each recorded iteration
        all_iters = [h[3] for h in history]
        all_losses = [h[2] for h in history]
        ax_loss.plot(all_iters, all_losses, color="darkorange", linewidth=2)
        ax_loss.set_xlabel("Iteration")
        ax_loss.set_ylabel("Loss (MSE)")
        ax_loss.set_title("Loss over training")

        plt.tight_layout()
        plt.savefig("regression.png")

    # --- Run ---
    print("Training...\n")
    final_slope, final_intercept = train(sizes, prices)
    print(f"\nFinal model: price = {final_slope:.2f} * size + {final_intercept:.2f}")

    test_size = 75
    predicted_price = final_slope * test_size + final_intercept
    print(f"Predicted price for {test_size} sqm: £{predicted_price:.1f}k")

    print("\nGenerating animation...")
    animate_training(sizes, prices, history, final_slope, final_intercept)

Running It

cd ~/ai-labs/post-02

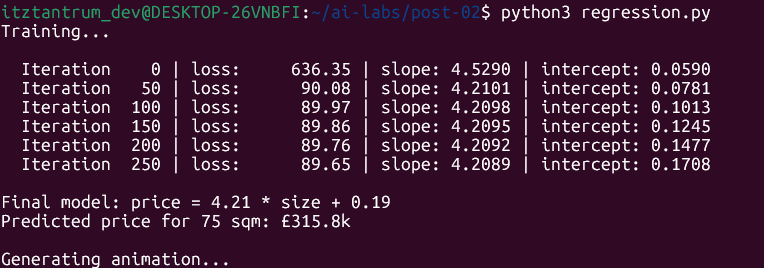

python3 regression.pyTerminal Output

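The loss drops from ~636 at iteration 0 to ~90 by iteration 50 - most of the learning happens in the first few dozen iterations. After that it barely moves. That flat stretch is what convergence looks like on a loss curve: further steps produce negligible improvement. One caveat for this particular run: the plateau is not quite the best a straight line can do. The slope settles within a few steps, but the intercept's gradient is tiny by comparison, so at this learning rate the intercept is still close to zero after 300 iterations. The exact least-squares line for this dataset has a loss of roughly 37, and reaching it would take far more iterations or rescaled inputs.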
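A quick way to check how close a run like this gets to the best possible line is to compare it against the closed-form least-squares answer, which needs no iteration at all. The sketch below is my own check rather than part of the lab script: it recomputes the lab's gradient descent with the same settings (learning rate 0.0001, 300 iterations, starting from zero) and prints both results side by side. The variable names are assumptions.

```python
sizes = [30, 45, 50, 60, 65, 70, 80, 90, 95, 110]
prices = [150, 200, 210, 250, 270, 300, 340, 380, 400, 450]
n = len(sizes)

def mse(slope, intercept):
    """Mean squared error of the line on the dataset above."""
    return sum((slope * s + intercept - p) ** 2 for s, p in zip(sizes, prices)) / n

# Closed-form least-squares line (no iteration involved).
mean_size = sum(sizes) / n
mean_price = sum(prices) / n
best_slope = (
    sum((s - mean_size) * (p - mean_price) for s, p in zip(sizes, prices))
    / sum((s - mean_size) ** 2 for s in sizes)
)
best_intercept = mean_price - best_slope * mean_size

# Gradient descent with the lab's settings, starting from zero.
gd_slope, gd_intercept = 0.0, 0.0
for _ in range(300):
    errors = [gd_slope * s + gd_intercept - p for s, p in zip(sizes, prices)]
    slope_grad = (2 / n) * sum(e * s for e, s in zip(errors, sizes))
    intercept_grad = (2 / n) * sum(errors)
    gd_slope -= 0.0001 * slope_grad
    gd_intercept -= 0.0001 * intercept_grad

best_loss = mse(best_slope, best_intercept)
gd_loss = mse(gd_slope, gd_intercept)
print(f"least squares:    slope={best_slope:.3f} intercept={best_intercept:.2f} loss={best_loss:.1f}")
print(f"gradient descent: slope={gd_slope:.3f} intercept={gd_intercept:.2f} loss={gd_loss:.1f}")
```

The gap between the two losses is almost entirely down to the intercept. Sizes are numbers in the tens and hundreds, so the loss is far more sensitive to the slope than to the intercept, and a learning rate small enough to keep the slope stable moves the intercept very slowly.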

What the Lab Showed Me

The model started at slope=0, intercept=0 - a flat horizontal line at zero, far below every data point. Over 300 iterations it moved both parameters, step by step, each time in the direction that reduced the total error. By iteration 250 the adjustments were tiny enough to be essentially negligible.

The loss curve shape is what I found most instructive. It drops sharply at first because the model is far from optimal and almost any adjustment helps. As it approaches the minimum, each step has less room to improve and the gains shrink. That steep-then-flat shape is what convergence looks like - it's not a model-specific artefact, it shows up in training curves at every scale.

The two-parameter version here is simple enough that you can follow exactly what's happening. The same process running on a model with billions of parameters produces the same curve shape. The scale is different; the structure isn't.

What I Took from This

- Linear regression finds the line through data that minimises total squared error. The model has two parameters - slope and intercept - and training is the process of finding the values that make the error as small as possible.

- Gradient descent is how the model finds those values: take a step in the direction that reduces loss, repeat until convergence. It works without seeing the full loss landscape - it only needs to know which way is downhill at each step.

- The learning rate controls step size. Too large and the model overshoots. Too small and it barely moves. There's no universal right answer - it's chosen through experimentation.

- This mechanism generalises directly to larger models. The parameters are different in kind and scale; the training loop is the same.

- Because the model learns from data, the data is an attack surface. Poisoned training examples shift what the model learns, without any visible sign during training.