What Is AI, Really? Notes on Getting the Vocabulary Straight

These are my notes from working through the fundamentals of AI, cleaned up enough to be readable, but written the way I think about the material rather than the way a textbook would present it.

Why the Vocabulary Matters

The first thing I noticed when I started studying AI is that nobody agrees on what the words mean. Or rather, people use all the words as if they're interchangeable when they're not. In much mainstream coverage, artificial intelligence, machine learning, deep learning, neural networks, and generative AI all appear in the same sentence, referring to the same system.

That's a problem. These terms describe different things, and the differences matter when you're trying to figure out what a system actually does, what its limits are, or what can go wrong with it. Calling everything "AI" is like calling every vehicle a "car": technically not always wrong, but not useful when you need to know if something has an engine.

My working rule: be precise about which layer of the stack you're talking about, and be sceptical when others aren't.

The Hierarchy

These are nested categories, not synonyms.

Artificial Intelligence

The outermost layer. AI means any system that performs tasks normally associated with human cognition: recognising objects, understanding language, making decisions, planning.

The definition is intentionally broad because the field is old and the bar keeps moving. Chess engines were once the frontier of AI research. Hardly anybody calls them AI anymore; they're just software. This pattern even has a name, the AI effect: once something works, it stops counting as intelligence. What's left is always whatever we haven't solved yet.

For practical purposes, AI is the umbrella. Everything else sits under it.

Machine Learning

The key distinction here is how the system gets its behaviour. In traditional software, a programmer writes rules. In machine learning, the system infers rules from data.

A rule-based spam filter might check whether the subject line contains "FREE MONEY". An ML spam filter is trained on thousands of labelled emails and figures out the patterns itself; the programmer never writes those rules explicitly. The programmer writes the code that does the learning; the learned rules emerge from the data.
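To make the contrast concrete, here is a minimal sketch. The six subject lines and the word-counting "learner" are my own illustrative assumptions, far cruder than a real spam filter; the point is only that the second classifier's behaviour comes from the examples rather than from a hand-written condition.

```python
from collections import Counter

# Hypothetical labelled examples: (subject line, is_spam)
TRAINING = [
    ("free money now", True),
    ("claim your free prize", True),
    ("win money fast", True),
    ("meeting agenda for monday", False),
    ("lunch on friday", False),
    ("project budget review", False),
]

def rule_based_is_spam(subject):
    """A rule the programmer wrote by hand."""
    return "free money" in subject.lower()

def learn_word_scores(examples):
    """Score each word: occurrences in spam minus occurrences in non-spam."""
    spam_counts, ham_counts = Counter(), Counter()
    for subject, is_spam in examples:
        target = spam_counts if is_spam else ham_counts
        target.update(subject.lower().split())
    words = set(spam_counts) | set(ham_counts)
    return {word: spam_counts[word] - ham_counts[word] for word in words}

def learned_is_spam(subject, scores):
    """Classify by summing learned word scores; unknown words count as 0."""
    return sum(scores.get(word, 0) for word in subject.lower().split()) > 0

scores = learn_word_scores(TRAINING)
print(rule_based_is_spam("Win a free prize"))       # False: the fixed rule misses it
print(learned_is_spam("Win a free prize", scores))  # True: learned from the examples
```

Nobody wrote a rule about the word "prize"; its score came from the data. That is also why the learned version is harder to read: its "rules" are a dictionary of numbers.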

This shift from explicit rules to inferred ones has a significant consequence: most ML systems are opaque by default. Rule-based systems are readable. Most ML models encode their "rules" as numerical weights, which don't map back onto human language in any useful way. That opacity is a design consequence, not an accident, and it matters a lot when something goes wrong.

Deep Learning

A subset of machine learning that uses a specific architecture: artificial neural networks with many layers. The "deep" in the name refers to the depth of the network (the number of layers), not to any depth of understanding.

Deep learning is what drove most of the notable AI progress from around 2012 onwards: image recognition, speech transcription, language translation. The models require significant compute and large datasets to train, but the results are often substantially better than earlier approaches.

In practice, if someone says "we use AI" and the system deals with images, audio, or language, it's almost certainly a deep learning model.

Generative AI

A category of deep learning models trained to produce outputs (text, images, audio, code) rather than classify inputs or predict values. Large language models (LLMs) like the ones behind ChatGPT or Claude are generative AI. So are image generation systems like Stable Diffusion.

What separates generative AI from earlier ML isn't just the type of output. It's the scale of training (hundreds of billions of words, millions of images), which produces a kind of broad generalisation that narrower, task-specific models don't have. A model trained to classify spam knows a lot about spam. A large language model trained on a large share of the public internet knows something about almost everything, which makes it more flexible but also less predictable.

Generative AI is the category most prone to misrepresentation, because the outputs look fluent and confident even when they're factually wrong. The model has no built-in mechanism for distinguishing true statements from plausible-sounding ones.

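The hierarchy in plain form: AI contains machine learning, machine learning contains deep learning, and deep learning contains generative AI.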

A generative AI model is also a deep learning model, an ML model, and an AI system. But most AI systems deployed in the world (spam filters, fraud detection, recommendation engines, search rankings) are not generative AI. They're classifiers, or regression models, or some other form of ML that predates the current wave of language models by years or decades.

What AI Actually Does

Taxonomy is useful, but I find it more grounding to think about the tasks AI systems are built to perform. There are a handful of recurring patterns.

Classification

The system takes an input and assigns it to one of a fixed set of categories. Spam or not spam. Fraudulent transaction or legitimate. Which of ten thousand possible products is in this photo.

This is the most common form of deployed ML. A lot of what gets marketed as "AI" in enterprise software is classification under the hood. The model was trained on labelled examples; it learned to map inputs to labels; it now does that at scale.

Regression

Instead of a category, the output is a number. What will this flat sell for. How many units will we move next quarter. What's the expected failure time of this component.

Regression and classification are structurally similar: both learn from labelled examples. The difference is the output type: discrete label versus continuous value.
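One way to see the structural similarity is a sketch in which the same learner handles both tasks and only the label type changes. The nearest-neighbour lookup and the flat sizes and prices below are hypothetical, chosen by me for brevity.

```python
def nearest_label(examples, x):
    """Return the label of the training example whose feature is closest to x."""
    closest = min(examples, key=lambda example: abs(example[0] - x))
    return closest[1]

# Hypothetical training data: flat size in square metres -> label
price_examples = [(30, 150.0), (60, 270.0), (90, 390.0)]          # numeric label
band_examples = [(30, "small"), (60, "medium"), (90, "large")]    # category label

print(nearest_label(price_examples, 55))  # regression: a number
print(nearest_label(band_examples, 55))   # classification: a category
```

The code path is identical in both calls. What makes one regression and the other classification is what the labels in the training data look like.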

Clustering

The system groups similar inputs together without being told in advance what the groups should be. Customer segmentation by purchasing behaviour. Anomaly detection in network logs by isolating records that don't fit any cluster. Topic modelling across a document collection.

Clustering is unsupervised: there are no labels in the training data. The algorithm finds structure without being told what to look for. This makes the results harder to evaluate, because "correct" is less clearly defined.
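As an illustration, here is a minimal one-dimensional k-means sketch. K-means is just one clustering algorithm among many, and the spend figures and starting centroids are assumptions of mine. Note that no labels appear anywhere in the input.

```python
def k_means_1d(values, centroids, iterations=10):
    """Minimal 1-D k-means: alternate assignment and centroid-update steps."""
    centroids = list(centroids)
    clusters = []
    for _ in range(iterations):
        # Assignment step: each value joins its nearest centroid
        clusters = [[] for _ in centroids]
        for v in values:
            nearest = min(range(len(centroids)), key=lambda i: abs(v - centroids[i]))
            clusters[nearest].append(v)
        # Update step: move each centroid to the mean of its cluster
        new_centroids = [
            sum(cluster) / len(cluster) if cluster else centroids[i]
            for i, cluster in enumerate(clusters)
        ]
        if new_centroids == centroids:
            break  # assignments have stopped changing
        centroids = new_centroids
    return centroids, clusters

# Hypothetical monthly spend per customer
spend = [12, 15, 14, 95, 102, 99]
centroids, clusters = k_means_1d(spend, centroids=[0, 50])
print(clusters)  # [[12, 15, 14], [95, 102, 99]]
```

The algorithm found two groups, but it has no idea what they mean. Whether they are "casual" and "loyal" customers, or something else, is an interpretation a person adds afterwards.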

Recommendation

The system predicts what a particular user will find useful or relevant, based on their history and the behaviour of similar users. Streaming platforms, e-commerce, social media feeds: all of these are recommendation systems at their core.

What I find worth noting here is that a recommendation system is only as good as the metric it optimises for. A system built to maximise clicks behaves very differently from one built to maximise satisfaction. The optimisation target is a design decision, and it's often not disclosed.
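A small sketch of how much the optimisation target changes the result. The items and their scores are invented for illustration; a real system would estimate these values from user behaviour.

```python
# Hypothetical catalogue with an estimated click probability and a
# satisfaction score for each item
items = [
    {"title": "Shocking headline", "p_click": 0.30, "satisfaction": 0.20},
    {"title": "In-depth tutorial", "p_click": 0.10, "satisfaction": 0.90},
    {"title": "Product review", "p_click": 0.20, "satisfaction": 0.60},
]

def recommend(items, objective):
    """Rank items by whichever metric the system was built to optimise."""
    ranked = sorted(items, key=lambda item: item[objective], reverse=True)
    return [item["title"] for item in ranked]

print(recommend(items, "p_click"))
# ['Shocking headline', 'Product review', 'In-depth tutorial']
print(recommend(items, "satisfaction"))
# ['In-depth tutorial', 'Product review', 'Shocking headline']
```

Same items, same code, opposite rankings. The only thing that changed is the `objective` argument, which stands in for the design decision described above.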

Natural Language Processing

Systems that work with human language: translation, summarisation, sentiment classification, entity recognition, question answering. Large language models fall into this category, but so do much simpler rule-based text processors. NLP covers a wide range of sophistication.

Computer Vision

Systems that interpret images or video: detecting objects, reading text from images (OCR), tracking movement, analysing medical scans. Computer vision is one of the areas where deep learning produced dramatic improvements over earlier methods.

Generative Systems

Systems that produce new content: text, images, code, audio. These are the youngest and most publicly visible part of the field right now. They're also the most architecturally complex, which is worth keeping in mind when someone claims they're simple to understand or control.

Why the Distinctions Matter in Practice

The reason I care about getting these categories straight is not terminology for its own sake. It's that different types of AI systems fail in different ways, and knowing which type you're dealing with tells you what to watch for.

A classifier can be confidently wrong. It produces a label. If you don't know the model's error rate on inputs like the one you're testing, you have no basis for trusting the label, and the model itself gives you no signal about its own uncertainty unless that's been explicitly built in.
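Estimating that error rate takes nothing more than labelled examples the model wasn't trained on. A minimal sketch, where the threshold classifier and the five held-out transactions are hypothetical:

```python
def flag_large_transactions(amount):
    """Hypothetical classifier: flags any transaction over 1000 as fraud."""
    return "fraud" if amount > 1000 else "legitimate"

# Hypothetical held-out examples: (transaction amount, true label)
held_out = [
    (50, "legitimate"),
    (1200, "fraud"),
    (3000, "legitimate"),
    (900, "fraud"),
    (20, "legitimate"),
]

def error_rate(classifier, labelled_examples):
    """Fraction of examples where the predicted label differs from the true one."""
    wrong = sum(
        1 for features, label in labelled_examples
        if classifier(features) != label
    )
    return wrong / len(labelled_examples)

print(error_rate(flag_large_transactions, held_out))  # 0.4
```

The classifier returns "fraud" for 3000 with exactly the same confidence as for 1200. Only the held-out comparison reveals that it is wrong on two of the five cases.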

A generative model can hallucinate. The output is fluent and formatted like a factual statement. The model has no internal ground truth to check against. It generates the most statistically plausible continuation of the prompt; that's not the same as generating something accurate.
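A toy illustration of "plausible is not the same as accurate". This bigram counter is nowhere near a real language model, and the three-sentence corpus is invented, but the failure has the same shape: the output follows frequency in the training text, not truth.

```python
from collections import Counter, defaultdict

# Hypothetical training text: the wrong claim appears more often than the right one
corpus = [
    "the capital of australia is sydney",
    "the capital of australia is sydney",
    "the capital of australia is canberra",
]

def train_bigrams(sentences):
    """Count which word follows each word in the training text."""
    following = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for current, nxt in zip(words, words[1:]):
            following[current][nxt] += 1
    return following

def continue_text(following, prompt, max_words=5):
    """Greedily append the most frequent next word until none is known."""
    words = prompt.split()
    for _ in range(max_words):
        candidates = following.get(words[-1])
        if not candidates:
            break
        words.append(candidates.most_common(1)[0][0])
    return " ".join(words)

model = train_bigrams(corpus)
print(continue_text(model, "the capital of australia is"))
# the capital of australia is sydney
```

The output is fluent and wrong (the capital is Canberra). Nothing in the model marks it as wrong, because the model only stores counts of what followed what.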

A recommendation system can optimise for the wrong objective. The model does exactly what it was trained to do. If what it was trained to do diverges from what you actually want, you get a system that performs well by its own metric while producing outcomes you didn't intend.

These are different problems. They call for different responses. Conflating them under "AI issues" makes it harder to think clearly about any of them.

The Opacity Problem

This is the thing I keep coming back to. Most ML systems are opaque by default, not because anyone chose to hide how they work, but because the way they encode knowledge doesn't map onto human-readable rules. A deep learning model's behaviour lives in billions of numerical weights. Technically, it's all there. In practice, it's not interpretable.

This matters most when a system is making decisions with consequences. A fraud detection model flags a transaction. A hiring model scores a CV. A medical imaging model identifies a potential abnormality. In each case, the question "why did it decide that?" has a technically correct answer (the numerical weights produced this output) that is operationally useless.

The phrase "the model decided" is not an explanation. It describes where a decision came from, but it doesn't justify it or make it auditable. In any system where accountability matters, which is most systems that affect real people, opacity is a liability, not just an inconvenience.

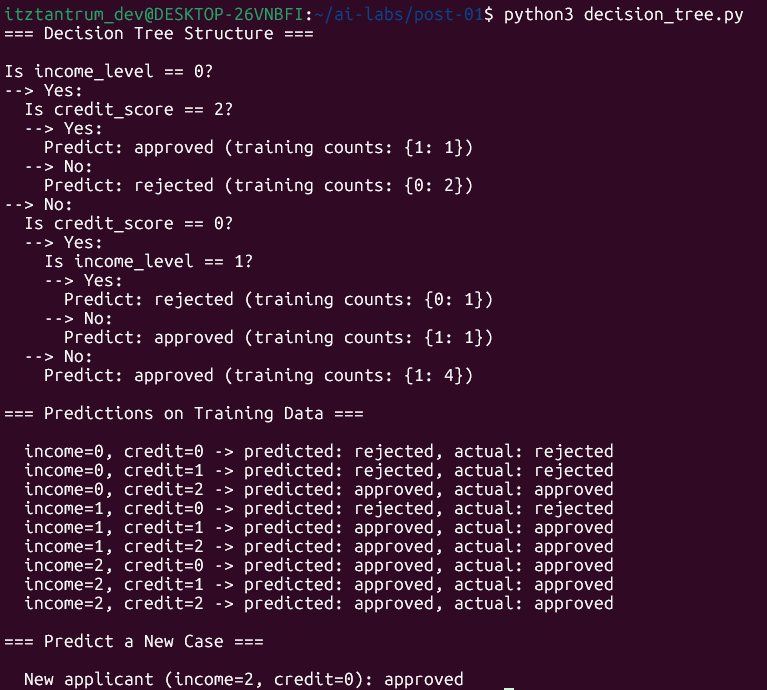

The decision tree in the lab below is interesting partly because it sidesteps this problem. It's a model you can read.

Lab: Building a Decision Tree from Scratch

I built this to make "learning from data" tangible rather than abstract. No external libraries: just Python and the algorithm itself, written out explicitly so every step is visible.

A decision tree classifies inputs by asking a sequence of yes/no questions. The learning phase figures out which questions to ask and in what order, based on the training data. The result is a tree structure that you can read like a flowchart.

The dataset here is synthetic: fictional loan applications with two features. The point isn't the domain; it's to see the algorithm work on data small enough to inspect.

Setup

    # Create the script file
    touch decision_tree.py

Open the file in an editor:

    nano decision_tree.py

Step 1: The Dataset

    # Each row is a loan application with two features and an outcome.
    # income_level: 0 = low, 1 = medium, 2 = high
    # credit_score: 0 = poor, 1 = fair, 2 = good
    # approved: 0 = rejected, 1 = approved
    #
    # Nine examples is tiny for real ML. It's enough to see the algorithm work.
    dataset = [
        # income_level, credit_score, approved
        [0, 0, 0],  # low income, poor credit -> rejected
        [0, 1, 0],  # low income, fair credit -> rejected
        [0, 2, 1],  # low income, good credit -> approved
        [1, 0, 0],  # medium income, poor credit -> rejected
        [1, 1, 1],  # medium income, fair credit -> approved
        [1, 2, 1],  # medium income, good credit -> approved
        [2, 0, 1],  # high income, poor credit -> approved
        [2, 1, 1],  # high income, fair credit -> approved
        [2, 2, 1],  # high income, good credit -> approved
    ]

    feature_names = ["income_level", "credit_score"]
    class_names = ["rejected", "approved"]

Step 2: Measuring Impurity

The algorithm needs a way to measure how mixed a set of labels is. A set that's all one label is pure and maximally useful; a set that's evenly split is maximally uncertain.

Gini impurity quantifies this: 0 means perfectly pure, 0.5 means maximally mixed (for two classes).
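A quick worked check of those endpoints, using exact fractions so the numbers are easy to read. The helper name `gini_from_labels` is mine and separate from the lab's `gini` function; the last call uses the same label mix as the lab dataset (three rejected, six approved).

```python
from fractions import Fraction

def gini_from_labels(labels):
    """Gini impurity: 1 minus the sum of squared class proportions."""
    total = len(labels)
    if total == 0:
        return Fraction(0)
    impurity = Fraction(1)
    for label in set(labels):
        impurity -= Fraction(labels.count(label), total) ** 2
    return impurity

print(gini_from_labels([1, 1, 1, 1]))                 # 0   (all one class)
print(gini_from_labels([0, 0, 1, 1]))                 # 1/2 (evenly split)
print(gini_from_labels([0, 0, 0, 1, 1, 1, 1, 1, 1]))  # 4/9 (3 rejected, 6 approved)
```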

    def gini(rows):
        """
        Gini impurity for a list of rows. Each row's last element is its label.
        Formula: 1 - sum of (proportion of each class)^2
        """
        total = len(rows)
        if total == 0:
            return 0

        # Count how many rows belong to each class
        label_counts = {}
        for row in rows:
            label = row[-1]
            label_counts[label] = label_counts.get(label, 0) + 1

        impurity = 1.0
        for count in label_counts.values():
            probability = count / total
            impurity -= probability ** 2
        return impurity

    def info_gain(left, right, current_impurity):
        """
        How much does this split reduce impurity?
        Higher is better.
        """
        proportion_left = len(left) / (len(left) + len(right))
        return (
            current_impurity
            - proportion_left * gini(left)
            - (1 - proportion_left) * gini(right)
        )

Step 3: Finding the Best Split

At each node, the algorithm tries every possible split (every feature, every unique value for that feature) and picks the one that reduces impurity the most.
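To see what "reduces impurity the most" looks like in numbers, this standalone sketch scores every candidate split at the root using exact fractions. The helper names `gini_exact` and `gain_exact` are mine; the rows are the nine from the lab dataset.

```python
from fractions import Fraction

# Same nine rows as the lab dataset: [income_level, credit_score, approved]
rows = [
    [0, 0, 0], [0, 1, 0], [0, 2, 1],
    [1, 0, 0], [1, 1, 1], [1, 2, 1],
    [2, 0, 1], [2, 1, 1], [2, 2, 1],
]

def gini_exact(subset):
    """Gini impurity of a non-empty list of rows, as an exact fraction."""
    labels = [row[-1] for row in subset]
    return 1 - sum(Fraction(labels.count(l), len(labels)) ** 2 for l in set(labels))

def gain_exact(subset, feature_index, value):
    """Impurity reduction from splitting on feature == value."""
    left = [row for row in subset if row[feature_index] == value]
    right = [row for row in subset if row[feature_index] != value]
    weight_left = Fraction(len(left), len(subset))
    return (
        gini_exact(subset)
        - weight_left * gini_exact(left)
        - (1 - weight_left) * gini_exact(right)
    )

for feature_index, name in enumerate(["income_level", "credit_score"]):
    for value in (0, 1, 2):
        print(f"{name} == {value}: gain = {gain_exact(rows, feature_index, value)}")
```

On this dataset, four of the six candidates tie at a gain of 1/9 and the two `== 1` splits score 0. With an exact tie, which split a floating-point implementation picks at the root depends on the order it evaluates candidates and on rounding, so the tree shape is partly an accident of iteration order.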

    def split(rows, feature_index, value):
        """
        Split rows into two groups based on whether
        row[feature_index] equals value.
        """
        left = [row for row in rows if row[feature_index] == value]
        right = [row for row in rows if row[feature_index] != value]
        return left, right

    def best_split(rows):
        """
        Try every feature and every unique value.
        Return the split that maximises information gain.
        """
        best_gain = 0
        best_feature = None
        best_value = None
        current_impurity = gini(rows)
        num_features = len(rows[0]) - 1  # last column is the label

        for feature_index in range(num_features):
            values = set(row[feature_index] for row in rows)
            for value in values:
                left, right = split(rows, feature_index, value)

                # A split that puts everything on one side is useless
                if len(left) == 0 or len(right) == 0:
                    continue

                gain = info_gain(left, right, current_impurity)
                if gain > best_gain:
                    best_gain = gain
                    best_feature = feature_index
                    best_value = value

        return best_feature, best_value, best_gain

Step 4: Building the Tree

The tree is built recursively. Each call either produces a leaf node (when no split improves things) or a decision node (when a useful split exists), then recurses on each branch.

    class Leaf:
        """
        Terminal node. Holds the most common class among the
        training examples that reached this point.
        """
        def __init__(self, rows):
            label_counts = {}
            for row in rows:
                label = row[-1]
                label_counts[label] = label_counts.get(label, 0) + 1
            self.prediction = max(label_counts, key=label_counts.get)
            self.counts = label_counts

    class DecisionNode:
        """
        Internal node. Holds a question and two branches.
        """
        def __init__(self, feature_index, value, left, right):
            self.feature_index = feature_index
            self.value = value
            self.left = left    # rows where feature == value
            self.right = right  # rows where feature != value

    def build_tree(rows):
        """
        Recursively build the tree.
        Stop when no split improves the impurity.
        """
        feature_index, value, gain = best_split(rows)
        if gain == 0:
            return Leaf(rows)

        left, right = split(rows, feature_index, value)
        return DecisionNode(
            feature_index,
            value,
            build_tree(left),
            build_tree(right)
        )

Step 5: Reading and Using the Tree

    def print_tree(node, indent=0):
        """Print the tree structure as a readable flowchart."""
        prefix = " " * indent
        if isinstance(node, Leaf):
            print(f"{prefix}Predict: {class_names[node.prediction]} "
                  f"(counts: {node.counts})")
            return
        feature = feature_names[node.feature_index]
        print(f"{prefix}Is {feature} == {node.value}?")
        print(f"{prefix}--> Yes:")
        print_tree(node.left, indent + 1)
        print(f"{prefix}--> No:")
        print_tree(node.right, indent + 1)

    def predict(node, row):
        """Walk the tree to produce a prediction for one row."""
        if isinstance(node, Leaf):
            return class_names[node.prediction]
        if row[node.feature_index] == node.value:
            return predict(node.left, row)
        else:
            return predict(node.right, row)

    # --- Run it ---
    tree = build_tree(dataset)

    print("=== Decision Tree Structure ===\n")
    print_tree(tree)

    print("\n=== Predictions on Training Data ===\n")
    for row in dataset:
        prediction = predict(tree, row)
        actual = class_names[row[-1]]
        print(f" income={row[0]}, credit={row[1]} -> "
              f"predicted: {prediction}, actual: {actual}")

    print("\n=== Predict a New Case ===\n")
    new_applicant = [2, 0]  # high income, poor credit
    result = predict(tree, new_applicant)
    print(f" New applicant (income=2, credit=0): {result}")

Running It

    cd ~/ai-labs/post-01
    python3 decision_tree.py

Output

What the Lab Actually Demonstrates

What the algorithm did was:

- Receive labelled examples

- Find the questions that best separate the classes

- Encode those questions as a tree

- Apply that tree to new examples it's never seen

That is machine learning, in its most interpretable form. The "model" is the tree. The "learning" is the process of finding the best questions.

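What I find useful about this particular example is that you can trace every prediction. For the new applicant with high income and poor credit: income_level == 0? No → income_level == 2? Yes → approved. There's no ambiguity, no black box. The reasoning is fully visible. (One caveat: the nine training rows cover every combination of the two features, so this "new" applicant actually repeats a training row; a real test would use held-out data.)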

That visibility is the main thing that disappears as models get more capable. A random forest is just many decision trees voted together, and suddenly you can't read the reasoning anymore. A neural network is layers of weighted connections, and the reasoning is essentially unrecoverable in human terms. More capability tends to trade away interpretability, and that trade-off is worth being deliberate about.
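A sketch of the voting step, to show where the readability goes. The three "trees" here are hypothetical hand-written rules of mine; a real random forest trains each tree on a random sample of the rows and features, and usually has hundreds of them.

```python
from collections import Counter

# Three hypothetical stand-in "trees", each readable on its own
def tree_a(income, credit):
    return "approved" if income == 2 else "rejected"

def tree_b(income, credit):
    return "approved" if credit == 2 else "rejected"

def tree_c(income, credit):
    return "approved" if income + credit >= 2 else "rejected"

def forest_predict(trees, income, credit):
    """Majority vote across trees; returns the winning label and the tally."""
    votes = Counter(tree(income, credit) for tree in trees)
    return votes.most_common(1)[0][0], dict(votes)

forest = [tree_a, tree_b, tree_c]
print(forest_predict(forest, 1, 1))  # ('rejected', {'rejected': 2, 'approved': 1})
print(forest_predict(forest, 2, 0))  # ('approved', {'approved': 2, 'rejected': 1})
```

Each rule is a one-liner, yet the answer to "why was this applicant rejected?" is already "two of three rules voted that way, for different reasons". Scale that to hundreds of deeper trees and there is no single path left to read.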

What I Took from This

- The AI/ML/deep learning/GenAI hierarchy is a nesting of increasingly specific categories, not a vocabulary of synonyms. Using them interchangeably loses information.

- Different categories fail differently. Knowing which type of system you're looking at tells you what failure modes to consider.

- ML systems are opaque by default: not by conspiracy, but by architecture. That opacity has real consequences in any context where decisions need to be explained or audited.

- A decision tree makes its reasoning explicit. That's rare, and worth appreciating, before moving on to models that don't.